Introducing the b(AI)ndScores Winter Guard Score Prediction Machine

WGI | WINTER GUARD

3/14/2025 | 4 min read

Oh hey there! It’s been a minute since I updated the blog. And understandably so - the winter guard, percussion, and winds season has been in full swing for a few weeks and is almost moving too fast to keep up. As of writing, we’re only three weeks away from the start of the WGI Color Guard World Championships and four weeks away from Percussion & Winds World Championships.

The winter season has, by far, become the busiest time of year for bandScores in terms of both content and site traffic. Pointing back to the 2024 Year in Review, winter guard scores made up the large majority of scores we hosted on the website. That will definitely be the case again this year as the 2025 Score Hub has already surpassed 10,000 scores with plenty more to be added in the final weeks. In terms of traffic, the website is far outpacing the number of visitors that it saw this time last year.

That all said, there’s been limited time to focus on other projects while score and data maintenance has taken top priority. With that limited time available, I’ve been going back and forth about the idea of making predicted rankings with the b(AI)ndScores model that was used for the 2024 DCI and BOA seasons. Those rankings were popular and well received, but it felt redundant to make similar rankings for winter guards when WGI already publishes standings for groups attending World Championships.

Why are there no b(AI)ndScores Rankings for the winter guard season?

Furthermore, I discovered that it’s incredibly difficult to predict scores for World Championships based on results from regionals earlier in the season. When I built a few test models, the initial accuracies ranged from only 70-72%. After a few tweaks and iterations, I was able to get accuracy as high as 78%. Even so, when tested against historic scores, that accuracy translated to predictions that landed within +/- two points of the actual score only a little over 50% of the time. This was a far cry from the >90% accuracy achieved by the models for DCI and BOA.
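For anyone curious what a "within +/- two points" check looks like in practice, here is a minimal Python sketch. It is not the b(AI)ndScores model or its actual accuracy metric (neither is described in this post); the function name `share_within_tolerance` and the sample scores are made up purely for illustration.

```python
def share_within_tolerance(predicted, actual, tolerance=2.0):
    """Return the fraction of predictions within +/- tolerance points of the actual score."""
    if len(predicted) != len(actual):
        raise ValueError("predicted and actual must have the same length")
    if not predicted:
        raise ValueError("need at least one score")
    # Count predictions whose absolute error is no larger than the tolerance.
    hits = sum(1 for p, a in zip(predicted, actual) if abs(p - a) <= tolerance)
    return hits / len(predicted)


# Hypothetical scores for illustration only; these are not real results.
predicted = [88.0, 91.5, 79.0, 85.0]
actual = [86.5, 94.0, 80.0, 81.9]

print(share_within_tolerance(predicted, actual))
```

With these made-up numbers, two of the four predictions land within two points, which mirrors the roughly 50% hit rate described above.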

A difference of two points can translate to a huge swing in placements in an activity as competitive as winter guard. Case in point: in last year’s Scholastic A semifinals, fewer than two points separated the guards that finished 11th and 21st overall (the 21st place guard was first out of finals). It felt risky to publish rankings knowing that a two-point swing could mean that a guard ranked safely in the middle of the finals pack could miss finals altogether.

This eventually led to my next thought - if I wasn’t going to publish predicted scores, was there a way I could at least publish the model and put it into the hands of anyone who wants to predict their own scores? This is actually an idea I had been thinking about for a while and not even necessarily for WGI scores - I thought it would be really cool to build an app or tool that generated predictions based on input information, either for real or hypothetical scores. And today, I’m happy to share that the idea has turned into reality.

Introducing a first of its kind tool

The Winter Guard Score Prediction Machine is the first of its kind in the marching arts community, allowing users to generate score predictions entirely with their own data with the help of the b(AI)ndScores model. Additionally, unlike previous predictions that were generated solely with real data, this new tool allows users to create predictions with possible or hypothetical future scores. Another new feature is that the machine will also generate a guard’s predicted probability of advancing to finals at World Championships in their given class.

You may notice that the model only predicts scores but not placements. This was done on purpose given that scores vary widely from class to class and even year to year. A perfect example is the Scholastic World results from the past few seasons. In 2022, Avon HS won the class with only a 93.95, which was the lowest score by a Scholastic World champion since 1993. Two years later, Avon won Scholastic World again, but this time with a new class record score of 99.35. Given this variance, it would be unfair to say that a given predicted score will finish in one particular placement.

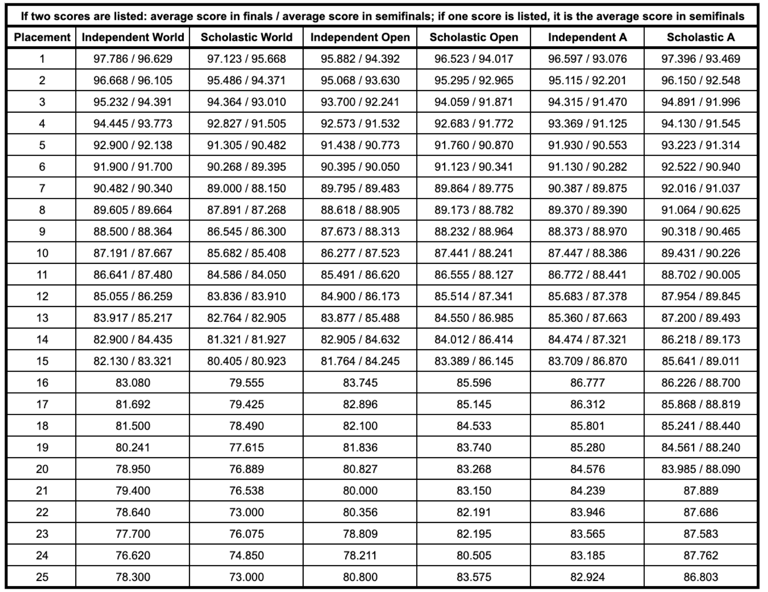

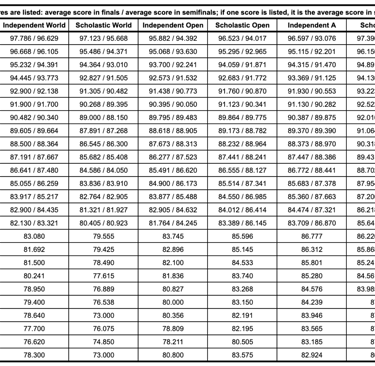

That being said, here’s a table of average scores by placement for each of the six classes at World Championships since 2012. You can use this as a guide to determine approximately where a predicted score may place in a given class.
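If you want to do that lookup programmatically, here is a small Python sketch that finds the placement whose historical average is closest to a predicted score. The function name `closest_placement` is hypothetical, and the averages in `example_averages` are placeholder numbers, not values from the table; swap in the real averages for your class.

```python
def closest_placement(predicted_score, avg_score_by_placement):
    """Return the placement whose average score is nearest to predicted_score."""
    if not avg_score_by_placement:
        raise ValueError("need at least one placement average")
    # Compare the predicted score against each placement's average
    # and keep the placement with the smallest absolute gap.
    return min(
        avg_score_by_placement,
        key=lambda place: abs(avg_score_by_placement[place] - predicted_score),
    )


# Placeholder averages for illustration only; NOT taken from the table.
example_averages = {1: 97.0, 2: 96.0, 3: 95.0, 5: 93.0, 10: 90.0}

print(closest_placement(94.2, example_averages))
```

Keep in mind this only gives a rough guide: as noted above, scores vary a lot from class to class and year to year, so the nearest average is an approximation and not a predicted placement.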

Again - winter guard scores are very hard to predict

Remember earlier when I said the WGI models only achieved 78% accuracy at best? That’s definitely still the case with the model behind the prediction machine, so any generated results should be taken with a grain of salt. Ultimately, the point about winter guard being an incredibly competitive activity can’t be stressed enough. You’re talking about cases where only 20 guards advance to Scholastic A finals out of a pool of 140, and multiple instances of guards that had low scores at regionals but still placed well in finals. Simply put, no amount of math or additional data points can accurately predict results that are based mostly on subjective opinions.

That said, I hope you find this new tool both useful and fun! It may be tempting to create wacky predictions with wildly impossible scores or scenarios, and I definitely encourage you to do so if you want! Alternatively, use it to create a number of hypothetical scenarios to figure out everything your guard needs to accomplish to have a realistic shot at making finals. You know the popular Pepe Silvia meme from It's Always Sunny in Philadelphia that's often used for silly conspiracy theories? You can absolutely imagine yourself as that meme when using the machine.

Happy score predicting!
